This is a short experience report about building a tiny TUI/CLI to inspect configured agents and model assignments in Opencode.

I wanted a simple way to see which models were configured on which (sub)agents and their relative costs. I also did not want to spend much time building it, which made this a good opportunity to try one-shotting it from a PRD.

Basically, I wanted to see:

- which agents exist

- which models they use

- how much those models cost per 1M tokens

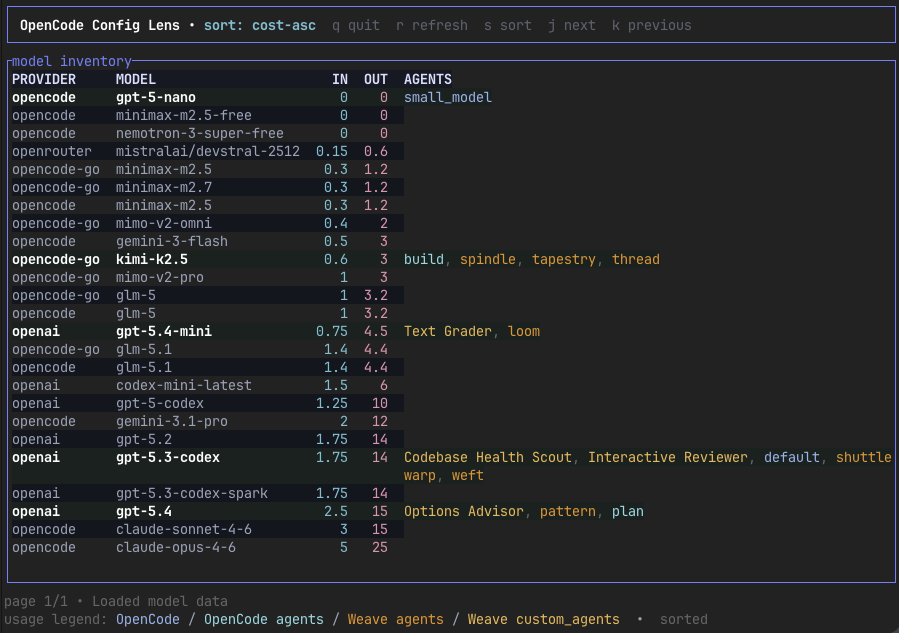

The result is a small CLI program called Opencode Config Lens.

In short, it scans ~/.config/opencode/ for the main config and can optionally include Weave config, which I am currently trialling. It then combines that with current pricing data from https://models.dev and renders a table that makes the configuration easier to inspect at a glance.
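
The join-and-render step described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the code from Opencode Config Lens: the `Agent` and `Price` types, the `join_prices` and `render_table` functions, and the column layout are all hypothetical, and reading the config files and fetching pricing from https://models.dev are left out entirely. It only shows how agent-to-model assignments might be combined with a per-model price lookup and printed as a plain-text table.

```rust
use std::collections::HashMap;

/// One configured agent and its assigned model (hypothetical shape).
struct Agent {
    name: String,
    model: String,
}

/// Price in USD per 1M tokens (hypothetical shape; the real pricing data may differ).
#[derive(Clone, Copy)]
struct Price {
    input: f64,
    output: f64,
}

/// Pair every agent with the price of its model, or `None` if the model is unknown.
fn join_prices<'a>(
    agents: &'a [Agent],
    prices: &HashMap<String, Price>,
) -> Vec<(&'a Agent, Option<Price>)> {
    agents
        .iter()
        .map(|agent| (agent, prices.get(&agent.model).copied()))
        .collect()
}

/// Format a price with two decimals, or "?" when no price is known.
fn fmt_price(value: Option<f64>) -> String {
    match value {
        Some(v) => format!("{:.2}", v),
        None => "?".to_string(),
    }
}

/// Render the joined rows as a left-aligned text table with a header line.
fn render_table(rows: &[(&Agent, Option<Price>)]) -> String {
    // Column widths: the longest cell, but never narrower than the header.
    let name_w = rows
        .iter()
        .map(|(a, _)| a.name.chars().count())
        .max()
        .unwrap_or(0)
        .max("AGENT".len());
    let model_w = rows
        .iter()
        .map(|(a, _)| a.model.chars().count())
        .max()
        .unwrap_or(0)
        .max("MODEL".len());

    let mut out = format!(
        "{:<nw$}  {:<mw$}  {:>8}  {:>8}\n",
        "AGENT",
        "MODEL",
        "IN $/1M",
        "OUT $/1M",
        nw = name_w,
        mw = model_w
    );
    for (agent, price) in rows {
        out.push_str(&format!(
            "{:<nw$}  {:<mw$}  {:>8}  {:>8}\n",
            agent.name,
            agent.model,
            fmt_price(price.map(|p| p.input)),
            fmt_price(price.map(|p| p.output)),
            nw = name_w,
            mw = model_w
        ));
    }
    out
}
```

To try it, build a `Vec<Agent>` and a `HashMap<String, Price>` by hand in a `main` function, pass them through `join_prices`, and print the result of `render_table`. Keeping the price as an `Option` means an agent whose model has no pricing entry still shows up in the table, with a "?" in the cost columns, instead of silently disappearing.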

Vibe coded

The project was vibe-coded from a single PRD that I created in a back-and-forth with GPT-5.4. Asking the coding agent to implement the PRD produced a working program.

After the initial generation, I made a few more functional changes and refactors, and did some general cleanup. I could not resist iterating, which is to be expected: building something tends to reveal what else it could do. I tell myself I am learning how LLMs work, or just trying not to release something too sloppy, but I suspect I should have stopped earlier.

So I ended up with a TUI

This is also my first experiment with building an actual TUI application (using Ratatui). It is probably overkill compared with a simple CLI table, but since I was instructing an LLM, the bar was low. Below is what it looks like.

What is interesting is that I only had to ask for a TUI instead of a text-based CLI in my original spec. I literally changed it to: “build me a TUI application.” Asking an LLM to make later changes to the TUI did not feel much different from asking for changes to a text-based CLI.

Reflections

Although the one-shot result was fully functional, I kept iterating for a few more hours. I enjoyed it, mostly because once you see the product, you get more ideas for improvement. Could I have built a plain CLI by hand in the same total amount of time? Perhaps, but it would not have been much faster, and the result would have been less good-looking and less responsive. A TUI, definitely not.

I did not learn much about building a TUI, nor did I get much Rust practice, so there is that. But that was not really the goal. I wanted some basic insight into my configuration, and I got that. I should probably get better at knowing when to stop.

This is also a program that probably would not have been written without LLMs. (I do appreciate the irony of using LLMs to build LLM tooling.)

Closing thought

The program does its job well. It looks good, and I still find it surprising how easily you can get to this result with an LLM and a coding agent. Although the TUI worked from the start, I kept iterating not to fix bugs but to make it clearer and better. That feels similar to more professional development: it's only when you see the product or build it hands-on that you learn, get ideas, and improve.